Are online IQ tests accurate? The kind answer is: some are useful, and none should be treated as a verdict. A good one can place your reasoning performance in the right neighbourhood, much as a bathroom scale can give a fair reading without knowing the history of your body. The art is knowing what the number can bear, and what it can't.

Are online IQ tests accurate? The honest answer

Yes, some online IQ tests are accurate enough for curiosity, self-reflection and a rough percentile estimate. No online test should be treated like a full assessment from a psychologist.

That difference matters because the word "accurate" carries a quiet promise. A well-built test samples reasoning skills, compares your answers with a norm group, and reports an IQ-style score with some humility. A weak one gives nearly everyone a pleased little shock and hopes the number will make them buy a certificate.

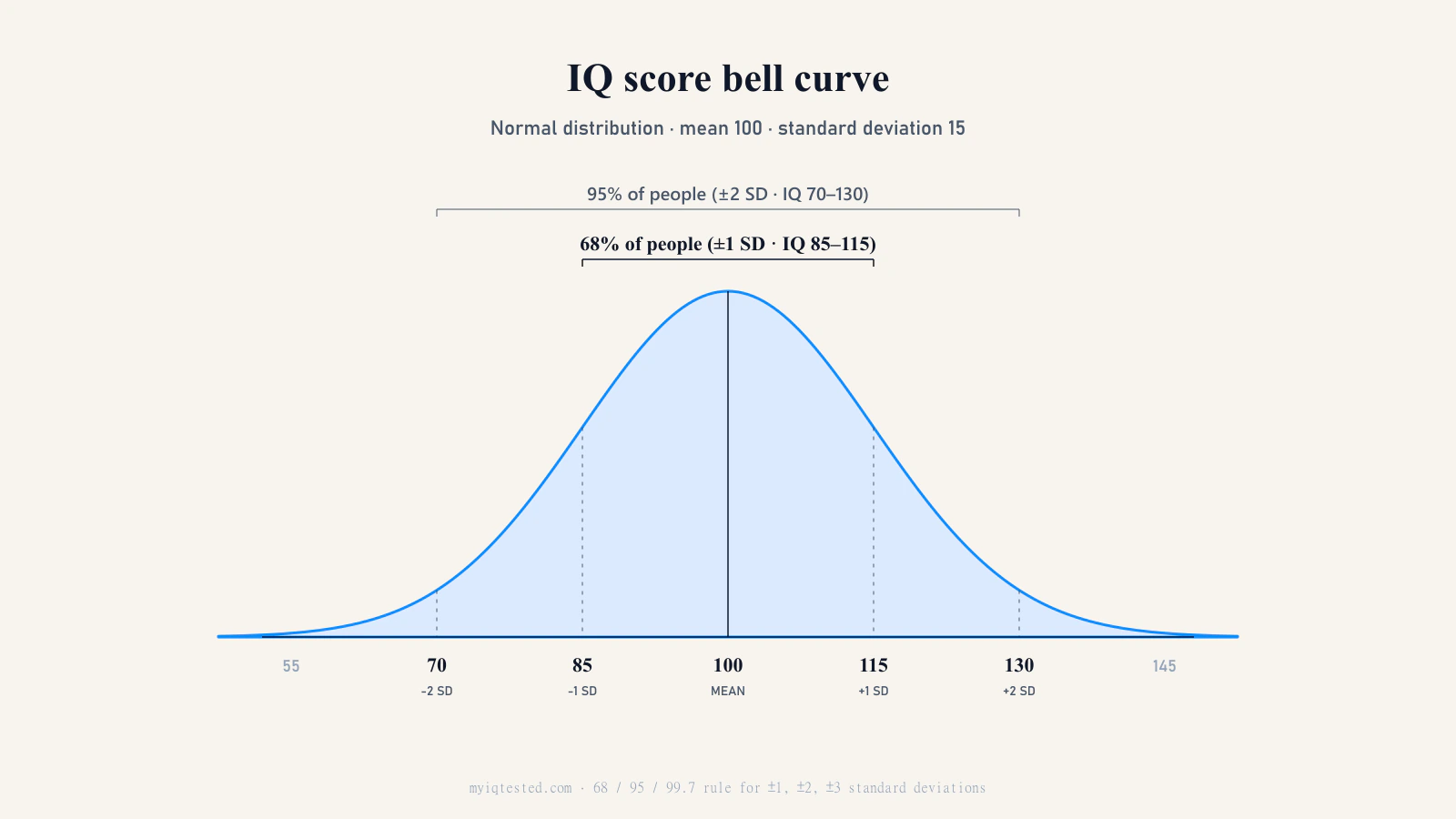

So the honest answer is practical: a credible online IQ test gives an estimate, not a clinical conclusion. Your result is best read as a band, not a dot on the wall. If you score 120 online, the careful reading is that you're probably in the high-average to superior area, not that your mind has been measured forever at exactly 120. For bands and percentiles, see our IQ score chart.

Accuracy, reliability and validity are three different things

People use "accurate" as one word for several worries. Did the test measure reasoning, or only puzzle familiarity? Would you get a similar score tomorrow morning? Does the number mean anything outside the browser tab?

Psychometrics separates those questions for a good reason.

Accuracy is closeness. It asks whether the score you see is near the score you'd expect from a high-quality, well-normed measure taken under proper conditions. If your standing is around 115 and a test reports 117, that's a decent estimate. If it reports 138 because the norms are stale or the scoring is generous, the number has dressed up wishful thinking as measurement.

Reliability is consistency. If the same person takes the same test, or a genuinely comparable form, under similar conditions, the score should stay close. The 2014 Standards for Educational and Psychological Testing describe reliability as consistency across replications of the testing procedure, while treating measurement error as part of real testing rather than a rare accident.1

Validity is about meaning. It asks whether evidence and theory support the interpretation of a score for a specific use.1 In IQ testing, that can mean links with established batteries such as the WAIS-IV, with school achievement, with work performance, or with other measures the test should sensibly relate to.

These three qualities can pull apart.

A test can be reliable but invalid. It gives the same wrong answer twice. Picture a 12-question vocabulary quiz sold as a complete IQ test. A word-loving adult may score high every time, but the test has barely touched spatial reasoning, numerical reasoning or nonverbal problem-solving.

A test can also be valid in groups but noisy for one person. Across thousands of people, it may predict achievement quite well. For one tired person taking it after a bad night's sleep, the score may sit several points away from their usual level.

And many short tests work better in the middle than at the tails. Above 130 or below 70, they often have too few hard or easy items to sort people cleanly. That's not a moral failing. It's a measurement problem.

What the ICAR validation data actually shows

The best public answer to whether free online IQ tests can be accurate isn't a slogan. It's ICAR, the International Cognitive Ability Resource.

ICAR is a public-domain item bank for cognitive ability research.2 It exists partly because many professional intelligence tests are proprietary, expensive and hard for researchers to use at large scale. In their 2014 paper in Intelligence, David Condon and William Revelle introduced ICAR as a public-domain cognitive measure designed for large-scale and remote administration.3

The original ICAR work used item types such as letter-number series, matrix reasoning, verbal reasoning and three-dimensional rotation. Its first large online study included nearly 97,000 participants across 199 countries, an unusually large sample for cognitive test development.3

The key question is whether ICAR scores line up with established measures. They do, though not perfectly. Condon and Revelle reported a corrected correlation of about r = .75 with Raven's Advanced Progressive Matrices, a classic nonverbal reasoning test.3 Young and Keith later compared the ICAR16 with the WAIS-IV in a university sample and found r = .81 between observed ICAR16 scores and WAIS-IV Full Scale IQ, with a stronger latent-factor relation in their model.4

Plainly put, a correlation around .75 to .85 says the tests are tracking much of the same ability. It also says they aren't interchangeable person by person. Someone can be stronger verbally than spatially. Someone else may rush matrices, know number series too well, or take the test in a kitchen while a child is asking where the scissors went.
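To put rough numbers on that, here is a minimal Python sketch. It assumes both tests report on the conventional IQ scale with a standard deviation of 15 and that the relation between them is linear. The function names are illustrative and not part of any ICAR tooling. Squaring the correlation gives the share of variance the two scores have in common, and the standard error of estimate shows how far one score typically sits from the value predicted by the other.

```python
import math

SD = 15.0  # assumed: conventional IQ standard deviation


def shared_variance(r: float) -> float:
    """Proportion of variance two measures share (r squared)."""
    return r ** 2


def standard_error_of_estimate(r: float, sd: float = SD) -> float:
    """Typical error, in IQ points, when predicting one score from the other."""
    return sd * math.sqrt(1.0 - r ** 2)


for r in (0.75, 0.81, 0.85):
    print(
        f"r = {r:.2f}: shared variance {shared_variance(r):.0%}, "
        f"typical prediction error {standard_error_of_estimate(r):.1f} points"
    )
```

Under these assumptions, r = .81 works out to about two thirds shared variance and a typical prediction error near 9 points. That is strong agreement across a group and still plenty of room for one person's two scores to differ.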

So ICAR gives real support for online measurement. It supports a research-grounded screening estimate. It doesn't turn a home sitting into a full WAIS-IV or Stanford-Binet assessment. For more background on scoring and norming, see how IQ tests work.

Why a single score is a band, not a point

Every IQ score contains measurement error. Not because the test is foolish. Because human performance wobbles.

The Standard Error of Measurement, or SEM, is the usual way test theory describes that wobble. The Standards for Educational and Psychological Testing explain that an observed score fluctuates around a hypothetical true score, and that SEM summarises the expected error in the testing procedure.1

Even respected clinical batteries have bands around their scores. WAIS-IV reviews and technical material report very high reliability, with Full Scale IQ test-retest reliability around .96, but not zero error.5 In practical terms, Wechsler-style composite scores often carry SEMs of only a few points, roughly 2.4 to 3.5.
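In classical test theory the SEM follows from the scale's standard deviation and the reliability coefficient: SEM = SD × sqrt(1 − reliability). The sketch below applies that textbook formula. It assumes the conventional IQ standard deviation of 15 and a simple band centred on the observed score, so it is an illustration and not the procedure of any particular test manual.

```python
import math


def sem(reliability: float, sd: float = 15.0) -> float:
    """Standard Error of Measurement under classical test theory."""
    return sd * math.sqrt(1.0 - reliability)


def confidence_band(score: float, reliability: float,
                    z: float = 1.96, sd: float = 15.0) -> tuple[float, float]:
    """Band around an observed score; z = 1.96 gives roughly 95% coverage."""
    half_width = z * sem(reliability, sd)
    return score - half_width, score + half_width


low, high = confidence_band(120, reliability=0.96)
print(f"SEM: {sem(0.96):.1f} points, 95% band: {low:.1f} to {high:.1f}")
```

With a reliability of .96 this gives an SEM of 3 points and a 95% band of roughly ±6 points around the observed score.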

Online testing adds ordinary life to the equation: no examiner, no controlled room, unknown effort, different screens, interruptions, timing quirks, and the small indignities of taking a reasoning test while the washing machine finishes its spin cycle. Under good conditions, a single online result is usually safest read as a ±5 point band. If you were tired, anxious, rushing or half-interrupted, ±10 is the kinder and more honest reading.

A result of 120 is best held as around 115 to 125. A result of 102 is around average. A result of 132 may place you near the top few percent, but it's worth confirming if the score will matter. Use the IQ score chart for a visual band and percentile reference, then read what your IQ score means for a fuller interpretation.
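For readers who like to check the arithmetic, here is a small sketch of how a score and its band translate into percentiles. It assumes scores follow a normal distribution with mean 100 and standard deviation 15, and it uses the ±5 point band suggested above as the default margin. The helper names are hypothetical.

```python
from statistics import NormalDist

# Assumed scale: mean 100, standard deviation 15.
IQ_SCALE = NormalDist(mu=100, sigma=15)


def percentile(score: float) -> float:
    """Percentage of the norm group expected to score at or below `score`."""
    return 100 * IQ_SCALE.cdf(score)


def band(score: float, margin: float = 5) -> tuple[float, float]:
    """Lower and upper ends of the band around a single online result."""
    return score - margin, score + margin


def describe(score: float, margin: float = 5) -> str:
    """One-line summary of a score, its band and the band's percentiles."""
    low, high = band(score, margin)
    return (
        f"{score}: band {low} to {high}, "
        f"percentiles {percentile(low):.0f} to {percentile(high):.0f}"
    )


for result in (102, 120, 132):
    print(describe(result))
```

On this scale a score of 132 falls at roughly the 98th percentile, and the 115 to 125 band spans about the 84th to the 95th percentile.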

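The Flynn Effect adds a slow drift across decades. Across the twentieth century, IQ test performance rose over time, with meta-analytic estimates varying by domain and period.6 A test scored against old norms therefore tends to report inflated numbers, because today's test-takers are being compared with an earlier, lower-scoring reference group.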

How an online test differs from a clinically administered one

A clinical IQ assessment isn't just a longer web quiz in a quieter font. It's a different social and measurement setting.

A trained psychologist controls the room, checks instructions, watches effort, handles start and stop rules, and scores responses using standard procedures. A full adult battery often takes about 60 to 90 minutes and may combine verbal comprehension, perceptual reasoning, working memory, processing speed and nonverbal tasks. WAIS-IV reviews report an average core administration time around 67 minutes.5

The Stanford-Binet 5 is similar in spirit: a broad, individually administered test with verbal and nonverbal routes across several cognitive factors. Its publisher reports Full Scale, Nonverbal and Verbal IQ reliabilities in the .95 to .98 range.7

An online test makes a different trade. It usually has a shorter item set, browser scoring, no examiner, no controlled room, and a fixed path through the questions. You gain access, privacy and speed. You lose supervision, behavioural observations and tight control over conditions.

For high-stakes decisions, those losses matter. A school placement, legal evaluation or disability assessment can't rest on a quick home estimate. The setting has too many open doors. For self-knowledge, though, the trade is often acceptable. If you want to know whether your reasoning performance is roughly average, high average or unusually high, a well-built online test can help.

A short IQ test can be useful. It just can't map a whole person.

How to tell a credible online IQ test from a flattering one

A credible online IQ test should leave you calmer, not dazzled. It should explain its method plainly, admit uncertainty and resist the temptation to turn your result into a personality horoscope.

Look for six signals.

- Named methodology with citations. The site should say which item bank, scoring model or norming approach it uses.

- A named author, team or institution. Anonymous test pages deserve suspicion, especially when they ask for money.

- Public norms or validation evidence. You want sample size, scoring method and correlations with known measures, not just a sleek gauge.

- Results before payment. Paying for extras is one thing. Hiding the score until checkout is another.

- Honest limits. A good test tells you when to seek a psychologist.

- No guaranteed extremes. If every friend gets 128 to 145, the test is probably selling flattery.

The red flags are equally plain: a paywall before any result, claims that a web test replaces professional assessment, no method page, no norm sample, no named author, old-looking score tables, and visible score inflation where nearly everyone lands far above 100.
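A quick sanity check makes the inflation signal concrete. The sketch below assumes honest norms on a scale with mean 100 and standard deviation 15, and it treats the test-takers as independent draws from the general population, which a circle of friends is not. The function names are illustrative.

```python
from statistics import NormalDist

# Assumed scale: mean 100, standard deviation 15.
IQ_SCALE = NormalDist(mu=100, sigma=15)


def share_at_or_above(score: float) -> float:
    """Fraction of the norm group expected to score at or above `score`."""
    return 1.0 - IQ_SCALE.cdf(score)


def chance_all_at_or_above(score: float, people: int) -> float:
    """Probability that `people` independent test-takers all reach `score`."""
    return share_at_or_above(score) ** people


print(f"Share scoring 128 or higher: {share_at_or_above(128):.1%}")
print(f"Chance five people all do: {chance_all_at_or_above(128, 5):.1e}")
```

About 3% of a representative population scores 128 or higher on this scale, so five unrelated people all doing so by chance would be extraordinarily unlikely. Friends are not a random sample, so treat this as a reason for suspicion and not as proof.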

One more question helps. Does the site talk about uncertainty? If it gives you one grand number with no band, it is treating measurement like theatre. Good testing is less glamorous than that. It has error bars, caveats and the modest decency to say what it doesn't know.

Is myiqtested.com accurate? An honest answer

myiqtested.com is built for one purpose: a free, transparent estimate of cognitive test performance.

The test draws from the ICAR public-domain item bank, the strongest open cognitive testing project available to non-clinical users.2 It uses 33 items across abstract, verbal, numerical and spatial reasoning. It is browser-scored, and you don't need to pay before seeing your result.

The evidence behind that choice is not a vague trust-us claim. ICAR validation studies show strong relations with established cognitive measures. Condon and Revelle reported about r = .75 with Raven's Advanced Progressive Matrices.3 Young and Keith found r = .81 between observed ICAR16 scores and WAIS-IV Full Scale IQ in their sample.4 Dworak, Revelle, Doebler and Condon also describe ICAR as an open-source tool now used across many individual-differences studies.8

That is a good basis for a screening estimate. It is not a licence to overclaim.

One sitting at home should be treated as a ±5 to ±10 point band, not a perfect point estimate. Illness, anxiety, poor sleep, multitasking, repeated exposure to similar items, or simply a noisy room can move the score. A person is not less intelligent because the doorbell rang during a spatial rotation item. They were interrupted during a task.

myiqtested.com is not a clinical assessment. It isn't appropriate for educational placement, occupational gating, legal proceedings or medical decisions. It is free, transparent, based on published cognitive items, and used as a self-knowledge instrument by hundreds of thousands of people.

That's the promise: useful measurement, held with humility.

When to seek a clinical assessment instead

Use a licensed psychologist and a full WAIS-IV or Stanford-Binet battery when the result will affect someone's rights, services, schooling, work or treatment.

That includes gifted-and-talented placement, educational accommodations, learning-disability evaluation, ADHD evaluation, occupational screening, legal proceedings, disability claims, neuropsychological questions, or any clinical or medical decision. The Standards for Educational and Psychological Testing are clear in spirit: the level of reliability and validity evidence should rise as the consequences of a decision rise.1

Formal memberships can also require supervised testing. Mensa International describes membership as based on scoring in the top 2% on an approved intelligence test.9 An online score may tell you whether trying is worthwhile. It usually won't serve as formal proof.

For self-knowledge, comparison with friends, curiosity, or a starting point for reflection, an ICAR-based online score is fit for purpose. It can give you a useful sketch of how you performed on reasoning tasks that day. For a life decision, get a professional assessment.

If you're wondering why scores can shift over time, and what practice, learning and health can reasonably change, read "Can you improve your IQ?" The number deserves context. So do you.